Last Updated on 19/06/2019 by TDH Publishing (A)

It is now possible to take a talking-head style video and add, delete, or edit the speaker’s words as simply as you would edit text in a word processor. A new deepfake software lets you make these changes in a transcript of the video, and the changes are reflected seamlessly in the video itself.

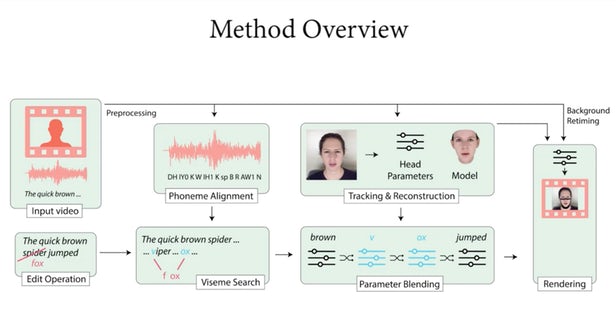

This new algorithm can process the audio or video into a new file in which the speaker says whatever you wish. The work is a collaboration between teams from Stanford University, the Max Planck Institute for Informatics, Princeton University and Adobe Research, who say the software could cut down on expensive re-shoots when an actor gets something wrong or when a script needs changes.

To learn a speaker’s face movements, the algorithm requires a 40-minute training video, which lets it work out exactly what face shapes the subject makes for each phonetic syllable in the original script. Once you edit the script, the algorithm creates a 3D model of the face making the required new shapes. From there, a new machine learning technique called Neural Rendering paints the 3D model with photo-realistic textures to make it look indistinguishable from the real thing.
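As a rough illustration of the first step described above, the sketch below averages face-shape parameters per phoneme from training samples and then looks them up for an edited script. It is a toy model under stated assumptions, not the researchers' method: the function names, the two-number "face shape" vectors, and the phoneme labels are all hypothetical, and the real system's 3D modelling and neural rendering are not represented.

```python
from collections import defaultdict
from statistics import fmean


def build_phoneme_shapes(samples):
    """Average the face-shape parameters observed for each phoneme.

    `samples` is a list of (phoneme, parameters) pairs. The parameter
    vectors are hypothetical stand-ins for whatever a real face tracker
    would extract from the training video.
    """
    grouped = defaultdict(list)
    for phoneme, params in samples:
        grouped[phoneme].append(params)
    # Column-wise mean over every observation of the same phoneme.
    return {
        phoneme: [fmean(column) for column in zip(*rows)]
        for phoneme, rows in grouped.items()
    }


def shapes_for_script(phonemes, table):
    """Return one face-shape vector per phoneme of the edited script."""
    missing = [p for p in phonemes if p not in table]
    if missing:
        raise KeyError(f"no training data for phonemes: {missing}")
    return [table[p] for p in phonemes]


# Made-up training observations (assumption, for illustration only).
training = [
    ("HH", [0.2, 0.1]),
    ("HH", [0.4, 0.3]),
    ("AY", [0.8, 0.6]),
]
table = build_phoneme_shapes(training)
frames = shapes_for_script(["HH", "AY"], table)
```

A phoneme that never appears in the training video raises an error here, which hints at why a long training video is needed: the more footage, the more phonemes are covered.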

If you also want to generate the speaker’s audio to go with the video, you can use software like VoCo. It takes a similar approach, breaking down a heap of training audio into phonemes and then using this dataset to construct new words in a familiar voice. The team is well aware of its software’s potential for unethical uses, though the world has yet to be hit by its first great deepfake scandal.

The research team behind this new software makes some feeble attempts to deal with its potential misuse, proposing that anyone who uses the software can optionally watermark the resulting video as fake and provide “a full ledger of edits”. The team also suggests making use of better forensics, such as digital or non-digital fingerprinting techniques, to identify whether a particular video has been manipulated for ulterior purposes. Yet the better these systems get at automatically spotting fake videos, the better the fakes will become.
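The article does not say what a “full ledger of edits” would look like in practice. One possible reading, sketched here purely as an assumption, is an append-only log in which each entry is chained to the previous one with a SHA-256 hash, so that later tampering with the log can be detected. The names `append_edit` and `verify`, and the entry layout, are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash, description):
    # Hash a canonical JSON encoding of the entry's contents.
    payload = json.dumps({"prev": prev_hash, "edit": description}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_edit(ledger, description):
    """Append a description of one transcript edit to the ledger."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS
    entry = {
        "prev": prev_hash,
        "edit": description,
        "hash": _entry_hash(prev_hash, description),
    }
    ledger.append(entry)
    return entry


def verify(ledger):
    """Return True if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in ledger:
        expected = _entry_hash(prev_hash, entry["edit"])
        if entry["prev"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


ledger = []
append_edit(ledger, "replaced 'four' with 'five' at 00:12")
append_edit(ledger, "deleted 'um' at 00:31")
```

Such a log only shows that the recorded edits have not been changed after the fact; it cannot force anyone to record an edit in the first place, which is why it remains optional in the proposal.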